A Basic Game Architecture

So we have our Android application up and running, but you might be wondering what kind of application a game actually is.

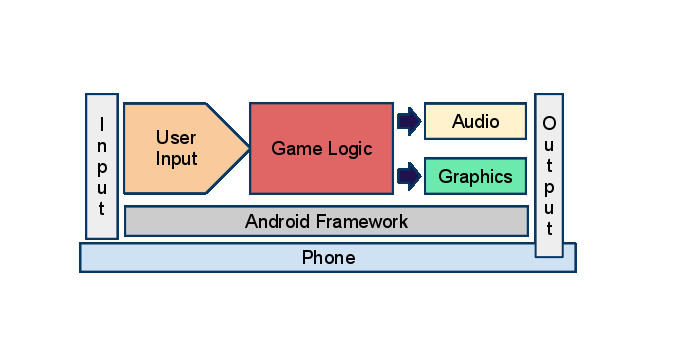

I will try to give you my understanding of it. The following diagram represents a game architecture.

Game Engine Architecture

In the diagram above you see the Android OS running on the phone, with everything else built on top of it.

In our case the input is the touch screen, but it can also be a physical keyboard if the phone has one, the microphone, the camera, the accelerometers or even the GPS receiver, if the device is equipped with them.

The framework exposes the events when touching the screen through the View used in our Activity from the previous article.

The User Input

In our game this is the event generated by touching the screen in one of the two defined control areas (see Step 1, the coloured circles). Our game engine monitors the onTouch event and records the coordinates of every touch. If the coordinates fall inside one of our defined control areas, we instruct the game engine to take action. For example, if the touch occurs in the circle designated to move our guy, the engine is notified and our guy is instructed to move. If the weapon controller circle is touched, the equipped weapon is instructed to fire its bullets. All of this translates to changing the states of the actors affected by our gestures, that is, by the input.
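The check described above can be sketched in plain Java. This is only a minimal illustration, not the code we will end up with: the class names `ControlArea` and `TouchRouter`, the `Action` enum and all coordinates are made up for the example, and I assume the control areas are circles given by a centre and a radius.

```java
/** Hypothetical circular control area; the name and fields are illustrative only. */
final class ControlArea {
    final float centerX;
    final float centerY;
    final float radius;

    ControlArea(float centerX, float centerY, float radius) {
        this.centerX = centerX;
        this.centerY = centerY;
        this.radius = radius;
    }

    /** Returns true when the touch point lies inside or on the circle. */
    boolean contains(float touchX, float touchY) {
        float dx = touchX - centerX;
        float dy = touchY - centerY;
        // Compare squared distances so no square root is needed.
        return dx * dx + dy * dy <= radius * radius;
    }
}

/** Hypothetical helper that decides which action a touch should trigger. */
final class TouchRouter {
    enum Action { MOVE, FIRE, NONE }

    private final ControlArea moveArea;
    private final ControlArea fireArea;

    TouchRouter(ControlArea moveArea, ControlArea fireArea) {
        this.moveArea = moveArea;
        this.fireArea = fireArea;
    }

    /** Maps the recorded touch coordinates to the action the engine should take. */
    Action route(float touchX, float touchY) {
        if (moveArea.contains(touchX, touchY)) {
            return Action.MOVE;
        }
        if (fireArea.contains(touchX, touchY)) {
            return Action.FIRE;
        }
        return Action.NONE; // touch landed outside both control areas
    }
}
```

In a real Activity the coordinates would come from the touch event delivered to the View; here they are just plain floats so the idea can be tried outside Android.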

What I have just described is already part of the Game Logic, which follows.

Game Logic

The game logic module is responsible for changing the states of the actors in the game. By actors I mean every object that has a state: our hero, droids, terrain, bullets, laser beams and so on. For example, when we touch the upper half of the hero control area, as in the drawing, this translates to: calculate the movement speed of our guy according to the position of our movement controller (our finger).

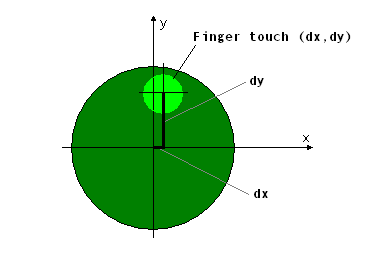

User Input - Finger touch on controller area

In the image above the light green circle represents our finger touching the control area.

The User Input module notifies the Game Engine (Game Logic) and also provides the coordinates.

dx and dy are the distances in pixels relative to the controller circle centre.

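The game engine calculates the new speed it has to set for our hero and the direction in which he will move.

If dx is positive he will go right, and if dy is positive he will also move upward. Here dy is measured so that up is positive; on Android the screen's y coordinate grows downward, so the raw touch value has to be flipped.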
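Here is a rough sketch of how dx and dy could be turned into a velocity. The class `MovementController`, its fields and the `maxSpeed` value are hypothetical, and I assume the speed grows linearly with the distance of the finger from the circle centre, capped at the edge of the circle. The y coordinate is flipped so that a positive dy means up.

```java
/** Hypothetical movement controller; names and the linear speed mapping are assumptions. */
final class MovementController {
    final float centerX;
    final float centerY;
    final float radius;
    final float maxSpeed; // speed reached when the finger is at the circle's edge

    MovementController(float centerX, float centerY, float radius, float maxSpeed) {
        this.centerX = centerX;
        this.centerY = centerY;
        this.radius = radius;
        this.maxSpeed = maxSpeed;
    }

    /** Returns {vx, vy} where positive vx means right and positive vy means up. */
    float[] velocityFor(float touchX, float touchY) {
        float dx = touchX - centerX;
        // Screen y grows downward, so subtract the other way round to make "up" positive.
        float dy = centerY - touchY;
        float distance = (float) Math.sqrt(dx * dx + dy * dy);
        if (distance == 0f) {
            return new float[] {0f, 0f}; // finger exactly on the centre: no movement
        }
        // Speed is proportional to the distance, capped at the circle's radius.
        float speed = Math.min(distance, radius) / radius * maxSpeed;
        // Dividing by the distance normalises (dx, dy) into a direction.
        float scale = speed / distance;
        return new float[] {dx * scale, dy * scale};
    }
}
```

With a controller centred at (100, 400), a radius of 50 and a maximum speed of 10, a touch at the right edge of the circle gives the full speed to the right, while a touch halfway up gives half the speed upward.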

Audio

This module produces sounds based on the current game state.

Almost every actor/object will produce sounds in its different states, but the devices we'll run our game on are limited to just a few channels (put briefly, the number of sounds the device can play at once). The audio module therefore has to decide which sounds to play.

For example, the droid posing the biggest threat to our hero will be heard, because we want to draw attention to it. And of course we will need to reserve a channel for the awesome shooting sound of our weapon, as it is great fun listening to our blaster singing.
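One simple way to make that decision is to give every sound a priority and keep only the most important ones. The sketch below is just that, a sketch: `SoundRequest`, `SoundMixer`, the priority numbers and the channel count are all invented for the example and say nothing about how Android's audio APIs work.

```java
import java.util.ArrayList;
import java.util.Comparator;
import java.util.List;

/** Hypothetical request to play a sound; a higher priority means more important. */
final class SoundRequest {
    final String name;
    final int priority;

    SoundRequest(String name, int priority) {
        this.name = name;
        this.priority = priority;
    }
}

/** Hypothetical mixer that picks which sounds get one of the limited channels. */
final class SoundMixer {
    /** Returns at most maxChannels requests, ordered from highest to lowest priority. */
    static List<SoundRequest> select(List<SoundRequest> requests, int maxChannels) {
        List<SoundRequest> sorted = new ArrayList<>(requests);
        // Sort a copy so the caller's list is left untouched.
        sorted.sort(Comparator.comparingInt((SoundRequest r) -> r.priority).reversed());
        int count = Math.max(0, Math.min(maxChannels, sorted.size()));
        return new ArrayList<>(sorted.subList(0, count));
    }
}
```

With only two channels, a blaster shot with priority 10 and the nearest droid with priority 8 would be played, while a distant droid with priority 2 would stay silent.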

So this is the audio in a nutshell.

Graphics

This is the module responsible for rendering the game state onto the display.

This can be as simple as drawing directly onto the canvas obtained from the view, or it can mean drawing into a separate graphics buffer that is then passed to the view, which can be a custom view or an OpenGL view.

We measure rendering speed in FPS, which stands for frames per second.

If we have 30 FPS, that means we display 30 images every second.

For a mobile device 30 FPS is great so we will aim for that.

The only thing you need to know for now is that the higher the FPS, the smoother the animation.
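To put a number on it: at 30 FPS each frame has a budget of 1000 / 30, roughly 33 milliseconds, for both updating the state and rendering it. A tiny sketch of that arithmetic follows; the `FrameTiming` class and its method names are hypothetical, and sleeping away the leftover time is just one possible way to hold a target frame rate.

```java
/** Hypothetical helper for frame-budget arithmetic. */
final class FrameTiming {
    /** Time available for one frame in whole milliseconds (integer division). */
    static long framePeriodMs(int targetFps) {
        return 1000L / targetFps;
    }

    /**
     * How long a loop could sleep after a frame that took elapsedMs to update
     * and render. Returns 0 when the frame already used up its budget.
     */
    static long sleepTimeMs(int targetFps, long elapsedMs) {
        return Math.max(0L, framePeriodMs(targetFps) - elapsedMs);
    }
}
```

For example, if a frame took 20 ms at a 30 FPS target, there are 13 ms left to wait before starting the next one. If it took 50 ms, we are already behind and there is nothing left to wait for.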

Imagine watching someone walk and closing your eyes for exactly one second.

When you open them again, you see the person where they are one second later.

That is roughly 1 FPS: one new image per second. Now watch them walk while keeping your eyes open and you'll see fluid motion.

To your eyes this is the equivalent of at least 30 FPS, and likely more, depending on your eyesight.

With very good eyesight this could be the equivalent of 80-100 FPS or more.

Output

The output is the combination of sound and image, plus vibration if we decide to produce some.

Next we will set up our view and try to build our first game loop, which will take input from the touch screen.

With that we'll have our first game engine.